Node Unblocker for Web Scraping: Setup, Usage, and Limitations Explained

Two common challenges businesses and researchers face when web scraping are geo-restrictions and IP bans, especially when the target sites have advanced anti-bot systems. Node Unblocker helps bypass such internet restrictions, making for a smoother web scraping process. This proxy tool reroutes your traffic through a remote server so that the target site sees the server's IP address instead of yours.

If you’re keen to learn more about how Node Unblocker works and how to set it up, keep reading. In this comprehensive guide, we will explore how Node Unblocker works, the steps for deploying it, its limitations, and more. So, without further ado, let’s dive right in!

Key Takeaways

- Middleware-Based Proxying: Node Unblocker functions as a lightweight Node.js middleware to enable access to blocked content. It is meant to allow users access to target sites while masking their real IP address, which is crucial for bypassing internet restrictions such as IP blocks.

- Real-Time Link Rewriting: Web unblockers like node-unblocker dynamically modify HTML, CSS, and JS to keep your browsing session entirely within the Node Unblocker proxy. This makes web scraping traffic more anonymous and harder for target websites to detect, especially when used with dedicated proxies.

- Simple Setup & Deployment: Setting up and deploying this proxy solution is pretty straightforward. This guide will help you discover how to launch it in minutes using tools like Express and hosting services like Render.

- Enhanced Web Compatibility: WebSocket support allows this proxy solution to handle modern, interactive sites that traditional proxy solutions often break. This is crucial when web scraping modern websites with sophisticated user interfaces.

- Critical Security Hurdles: Modern security systems such as Cloudflare and CAPTCHAs often block HTTP requests from standard node-unblocker setups.

- The Rotation Gap: Node Unblocker does not offer built-in IP rotation, which increases the chances of IP bans when accessing platforms that require signing in or stable sessions.

- Professional or DIY: Before choosing, you will need to compare the maintenance-heavy nature of self-hosting against the “set and forget” reliability of dedicated proxy networks.

- Strategic Integration: Node Unblocker can be used alongside automation and web scraping tools like Puppeteer and Playwright.

What Is Node Unblocker?

Node Unblocker is an open-source web proxy library built as middleware for Node.js. Its primary purpose is to bypass internet restrictions and network filters by acting as a proxy that fetches and unblocks web content for the end user. As covered later in the article, it can be used alongside tools such as Playwright to enable a more seamless and less restricted web scraping experience.

How Does Node Unblocker Work?

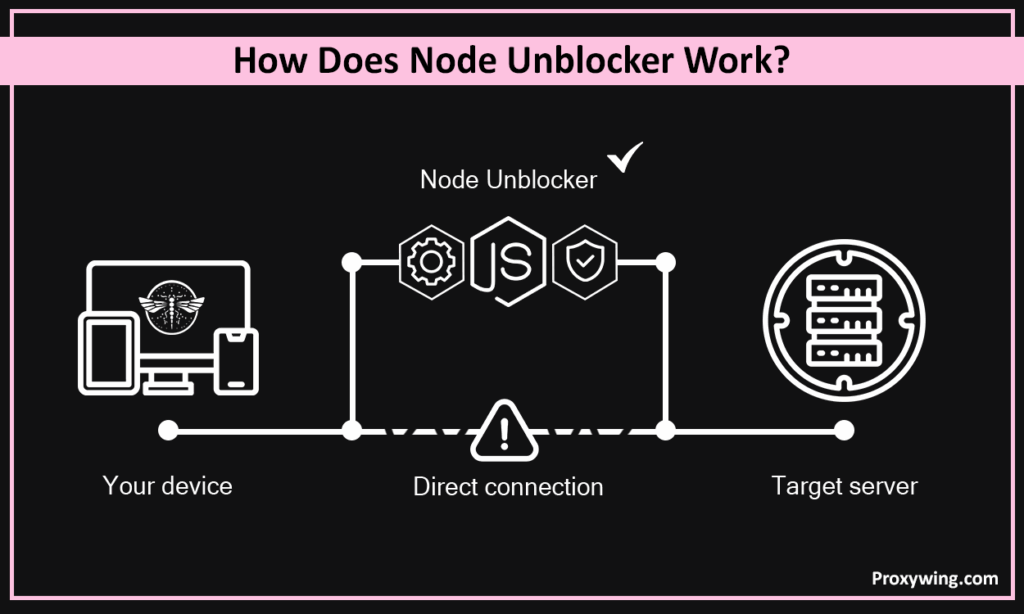

Your scraping activity is at risk if you connect directly to a target server with your device or local network IP address. Node Unblocker is built to solve this issue. It is a proxy that intercepts a web scraping request, fetches the data from the target website on the server's behalf, and rewrites the response.

It dynamically modifies HTML, CSS, and JS links so that all subsequent clicks and resource loads route through the proxy’s domain rather than connecting directly to the blocked sites. When you use this proxy solution, your real IP address and location are hidden from the target servers.
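The link rewriting described above can be sketched in a few lines. This is a simplified illustration, not node-unblocker's actual implementation: the helper names (`toProxiedPath`, `rewriteLinks`) are hypothetical, the `/proxy/` prefix is assumed to match the setup used later in this guide, and only double-quoted `href` and `src` attributes are handled.

```javascript
// Assumed prefix, matching the server.js example in this guide.
const PROXY_PREFIX = '/proxy/';

// Resolve a link against the page it appeared on, then route it through the prefix.
function toProxiedPath(link, pageUrl) {
  const absolute = new URL(link, pageUrl).href;
  return PROXY_PREFIX + absolute;
}

// Rewrite href/src attribute values in an HTML string (double-quoted only, for brevity).
function rewriteLinks(html, pageUrl) {
  return html.replace(/\b(href|src)="([^"]*)"/g, (match, attr, value) => {
    // Leave fragments and non-HTTP schemes (mailto:, data:, javascript:) untouched.
    if (value.startsWith('#') || /^(mailto|data|javascript):/i.test(value)) {
      return match;
    }
    return `${attr}="${toProxiedPath(value, pageUrl)}"`;
  });
}

const pageUrl = 'https://example.com/blog/post';
const html = '<a href="/about">About</a><img src="https://cdn.example.com/a.png">';
console.log(rewriteLinks(html, pageUrl));
// <a href="/proxy/https://example.com/about">About</a><img src="/proxy/https://cdn.example.com/a.png">
```

Relative links are resolved against the original page URL first, so every rewritten link still points at the right resource once it passes back through the proxy.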

Node Unblocker Prerequisites

Installing Node.js

Download Node.js from nodejs.org, then run the installer on your machine. Run the node -v and npm -v commands in a terminal (Linux and macOS) or PowerShell (Windows) to confirm that the runtime and package manager are installed.

Creating a New Project

Create a dedicated JS project folder to separate it from other files and allow npm to track the dependencies.

Use File Explorer on your machine or run the commands below to create the folder and move into it:

mkdir my-proxy-server
cd my-proxy-server

From inside the folder you created above, run the command below:

npm init -y

The above command initializes a package.json file for managing the project metadata.

Installing Critical Dependencies

To build the unblocker, you need two specific libraries, which are the proxy engine itself and a web server framework.

Install the Node Unblocker proxy engine and the web framework:

npm install node-unblocker express

In the above command, express is the Node.js web application framework that runs the web server, and node-unblocker is the engine that handles the logic of fetching external sites and rewriting their URLs.

Setting Up Node Unblocker (Step-by-Step)

Step 1: Create the Proxy Script

Create a file named server.js:

const express = require('express');
const Unblocker = require('node-unblocker');

const app = express();

// Requests under /proxy/ are fetched from the target site and rewritten
const unblocker = new Unblocker({ prefix: '/proxy/' });

// Mount the proxy as Express middleware
app.use(unblocker);

app.listen(8080, () => console.log('Proxy running on port 8080'));

The above JavaScript script initializes Express, attaches the Unblocker middleware with a specific URL prefix, and boots the server.

Step 2: Start the Local Server

Run the node server.js command to start the proxy server. If the command succeeds, the confirmation message is shown in the CLI.

Step 3: Test Proxy in a Browser

You can visit http://localhost:8080/proxy/https://www.google.com to test if the proxy works as expected. If the page loads, your proxy is successfully rewriting traffic.
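When testing several targets, it can help to build the proxied address programmatically rather than by hand. The sketch below assumes the defaults from the server.js example (port 8080 and the `/proxy/` prefix); the function name `proxiedUrl` is hypothetical.

```javascript
// Build the URL to request when a target should be fetched through the local proxy.
// Assumed defaults: the origin and prefix used in the server.js example.
function proxiedUrl(target, origin = 'http://localhost:8080', prefix = '/proxy/') {
  const parsed = new URL(target); // throws on malformed input
  if (parsed.protocol !== 'http:' && parsed.protocol !== 'https:') {
    throw new Error(`Unsupported protocol: ${parsed.protocol}`);
  }
  return origin + prefix + parsed.href;
}

console.log(proxiedUrl('https://www.google.com'));
// http://localhost:8080/proxy/https://www.google.com/
```

The helper normalizes the target first, which is why the printed address ends with a trailing slash.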

How to Leverage Node Unblocker In Web Scraping

Using Puppeteer For Scraping

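For those who may not know, Puppeteer is a Node.js library that provides a high-level API for controlling headless browsers over the DevTools Protocol. This automation tool can be used alongside Node Unblocker for web scraping. Because Node Unblocker proxies by URL prefix rather than acting as a forward proxy, route Puppeteer through your local node-unblocker instance by prepending the proxy address to the target URL, as in the script below:

const puppeteer = require('puppeteer');
// Run inside an async function.
const browser = await puppeteer.launch();
const page = await browser.newPage();
// Load the target through the /proxy/ prefix instead of the --proxy-server flag
await page.goto('http://localhost:8080/proxy/https://example.com/');

Scraping with Playwright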

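Playwright is an open-source automation library for browser testing, web scraping, and similar tasks. The script below shows how to load a page through the proxy with Playwright by prefixing the target URL with the proxy address.

const { chromium } = require('playwright');
// Run inside an async function.
const browser = await chromium.launch();
const page = await browser.newPage();
// Load the target through the /proxy/ prefix of the local Node Unblocker server
await page.goto('http://localhost:8080/proxy/https://example.com/');

Using Node Unblocker as an Internal Middleware Proxy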

Node Unblocker hides the scraper’s IP address, which helps bypass IP-based restrictions implemented by the target servers. Developers usually integrate it directly into scraping pipelines to mask the scraper’s origin without needing a third-party commercial proxy service. Because every request leaves from the host server’s single IP address, it does not rotate IPs on its own.

Deploying Node Unblocker to a Remote Server

Running Node Unblocker on a remote server keeps your proxy accessible 24/7 and gives it a consistent public IP address.

Deploying to Render

Render enables straightforward integration with GitHub, thanks to its developer-friendly interface. Follow the steps below to deploy it:

Step 1: Prepare The Repository

To get started, ensure your project folder contains a package.json with a defined start script: "scripts": { "start": "node server.js" }.

Create a .gitignore file and add node_modules/ to prevent uploading thousands of unnecessary files. Push the code to a GitHub repo.

Step 2: Create a New Web Service

Log in to your Render Dashboard and click New + > Web Service.

Connect your account (GitHub) and select the repository you created in step 1.

Step 3: Configure and Launch

- Runtime: Select Node.

- Build Command: npm install.

- Start Command: node server.js or npm start.

- Environment Variables: Render passes the port to your service through the PORT environment variable. Confirm that your code listens on process.env.PORT || 8080 rather than on a hardcoded port.
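The port check from the last item can be sketched as a small helper. This is a minimal illustration rather than required code: the function name `resolvePort` is hypothetical, and 8080 is assumed as the local fallback to match the server.js example.

```javascript
// Pick the listening port: prefer the platform-provided PORT, fall back to 8080 locally.
function resolvePort(env = process.env) {
  const port = Number.parseInt(env.PORT, 10);
  // Ignore missing or invalid values instead of crashing at startup.
  return Number.isInteger(port) && port > 0 && port < 65536 ? port : 8080;
}

const PORT = resolvePort();
console.log(`Proxy would listen on port ${PORT}`);

// In server.js, the hardcoded port would then become:
// app.listen(PORT, () => console.log(`Proxy running on port ${PORT}`));
```

The same code runs unchanged on a local machine, where PORT is usually unset, and on a host that injects its own value.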

Deploying via Heroku CLI

Heroku allows developers to deploy directly from the terminal. Follow these three steps for deploying Node Unblocker using Heroku:

Step 1: Installation and Login

Download the Heroku CLI for your OS, then run the heroku login command. This opens a browser window to authenticate your user account.

Step 2: Create the Heroku App

Go to your project folder and run the heroku create your-unique-app-name command where “your-unique-app-name” is the app name of your choice. The above command will create a new app on Heroku and add a “remote” called heroku to the local Git configuration.

Step 3: The Procfile and Deployment

Heroku reads a Procfile (a file named Procfile, with no extension) in your root directory to learn how to start the app. The contents of this file should be: web: node server.js.

To commit your changes, run the git add . and git commit -m "Prepare for Heroku" commands. Finally, deploy using the git push heroku main command.

Acceptable Use Policy Considerations

Before deploying a proxy for bypassing restrictions, you must review your hosting provider’s terms of service. Some hosting providers prohibit public proxies due to their high bandwidth usage. You must also ensure that your use of the proxy to bypass network restrictions does not violate local data privacy laws.
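One way to avoid running an open public proxy is to restrict which targets the server will fetch. The sketch below is an assumption-heavy illustration: the host list is hypothetical, and the middleware shape (a function receiving a `data` object with `url` and `clientResponse`, registered through a `requestMiddleware` option) should be checked against the library's documentation for your installed version.

```javascript
// Hypothetical allow-list; replace with the hosts your project is permitted to fetch.
const ALLOWED_HOSTS = new Set(['example.com', 'www.example.com']);

// True only when the target URL parses and its hostname is on the allow-list.
function isAllowedTarget(targetUrl) {
  try {
    return ALLOWED_HOSTS.has(new URL(targetUrl).hostname);
  } catch {
    return false; // malformed URLs are rejected
  }
}

// Assumed middleware shape: `data.url` is the target and
// `data.clientResponse` is the Express response object.
function restrictTargets(data) {
  if (!isAllowedTarget(data.url)) {
    data.clientResponse.status(403).send('Target not allowed.');
  }
}

// Assumed wiring (verify the option name in the library docs):
// new Unblocker({ prefix: '/proxy/', requestMiddleware: [restrictTargets] });

console.log(isAllowedTarget('https://example.com/pricing'));
console.log(isAllowedTarget('https://other.test/'));
```

Keeping the host check in its own function makes it easy to unit test without starting the server.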

Node Unblocker Limitations

No Built-in IP Rotation

By default, Node Unblocker routes all traffic through the single IP address of the host server. Without rotation, target websites can easily detect and block the connection when they see many requests arriving from the same IP address. Accessing heavily protected websites therefore requires pairing node-unblocker with residential proxies that offer trusted IPs in large quantities. An alternative is running several node-unblocker instances, each with its own IP address, which is expensive.
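The multi-instance alternative can be approximated on the client side with simple round-robin selection. The sketch below assumes three separately deployed instances; the hostnames and the `createRotator` helper are hypothetical, and each instance is assumed to use the `/proxy/` prefix.

```javascript
// Hypothetical instance URLs; each would be a separate deployment with its own IP.
const INSTANCES = [
  'https://proxy-a.example.com',
  'https://proxy-b.example.com',
  'https://proxy-c.example.com',
];

// Returns a function that cycles through the instances in order.
function createRotator(instances, prefix = '/proxy/') {
  if (instances.length === 0) {
    throw new Error('At least one instance is required');
  }
  let next = 0;
  return function viaNextInstance(target) {
    const base = instances[next];
    next = (next + 1) % instances.length; // wrap around after the last instance
    return base + prefix + target;
  };
}

const rotate = createRotator(INSTANCES);
for (const path of ['/page/1', '/page/2', '/page/3', '/page/4']) {
  console.log(rotate('https://example.com' + path));
}
```

The fourth request wraps around to the first instance again. With only a handful of IPs this spreads load but does not match the pool size of a commercial proxy network.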

Blocked by Advanced Anti-Bot Systems

Modern security solutions like Cloudflare use TLS fingerprinting and other advanced techniques to detect proxies. Node Unblocker often fails to mimic real web browsers, which can lead to permanent blocks or impossible CAPTCHAs. This makes it a less reliable option for handling web scraping projects or accessing content from JS applications and websites with strict anti-bot systems.

OAuth and Login Flow Issues

Because the tool rewrites URLs and modifies headers, it often breaks OAuth flows and session cookies, making it unreliable for handling web scraping data behind logins or navigating authenticated user paths.

High Maintenance Effort

Web standards change rapidly. Node Unblocker often uses complex pattern matching to find and replace URLs within a website’s source code. Keeping this “rewriting” logic functional requires constant debugging and manual updates to keep the proxy from breaking when a target site updates its JavaScript or switches to a different JS library.
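To see why pattern-based rewriting is fragile, consider a deliberately naive rewriter. It is not node-unblocker's real logic, and the function name `naiveRewrite` is hypothetical; it only catches absolute URLs that appear literally inside double quotes.

```javascript
// Naive rewriter: prefixes absolute http(s) URLs written as double-quoted literals.
function naiveRewrite(source, prefix = '/proxy/') {
  return source.replace(/"(https?:\/\/[^"]+)"/g, (_, url) => `"${prefix}${url}"`);
}

// A URL written as a single literal is found and rewritten.
const literal = 'fetch("https://api.example.com/items");';
// A URL assembled at runtime never appears whole in the source, so it is missed.
const dynamic = 'fetch("https://" + host + "/items");';

console.log(naiveRewrite(literal));
console.log(naiveRewrite(dynamic));
```

The second call returns its input unchanged, so that request would bypass the proxy and go straight to the target. A site that switches from literal URLs to runtime-built ones can break a rewriter in exactly this way.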

Node Unblocker vs. Dedicated Proxy Servers

| Feature | Node Unblocker | Dedicated Proxy Servers |
| --- | --- | --- |
| IP Pool | Single IP | Millions (Residential/ISP) |
| Bypass Power | Low (Easily detected) | High, especially residential and ISP proxies |
| Maintenance | High (Self-managed) | Zero (Managed) |

When You Should Use Real Proxy Servers Instead

- Bulk web scraping: If you are scraping high-security domains at scale, using dedicated proxy servers that can seamlessly rotate IPs is better than relying on Node Unblocker.

- Precise geo-targeting: Tasks like ad verification and SEO monitoring require precise geo-targeting, which is offered by dedicated proxy services with large IP pools. ProxyWing, for instance, has over 70 million IPs, giving users more flexibility to select the location that suits their needs.

- Bypassing Advanced Anti-Bot Shields: Many websites rely on advanced anti-bot systems like Cloudflare and DataDome that can easily detect a Node Unblocker request and block it instantly. In such cases, using residential IPs whose traffic resembles that of real users is a more reliable alternative.

- Multi-accounting: If you’re a digital marketer handling around 10 accounts, using a single IP of the node-unblocker server is risky and could potentially trigger account restrictions. In such cases, it is best to use dedicated mobile or residential proxies that allow you to assign each account a unique IP address, minimizing the chances of getting blocked.

Boost Node Unblocker with Reliable Proxies

Node Unblocker can be used alongside proxies to add capabilities like IP rotation. Traffic from the node-unblocker server can be routed through proxies before being sent to the destination. ProxyWing offers high-speed residential and ISP proxies that provide the anonymity and automated IP rotation needed to bypass most blocks.

Ideal Use Cases

- Learning Projects: It can be used by those interested in learning how proxies work.

- Basic Unblocking: Accessing websites with less advanced anti-bot detection systems.

- Low-Volume Data Scraping: Data collection from small websites with less advanced security systems.

Article written by:

Full Stack AI Engineer

Alexandre brings deep full-stack expertise to Proxywing's engineering efforts — from backend architecture and performance optimization to AI-driven development workflows. His hands-on work spans Node.js, React, cloud infrastructure, and RAG pipelines, giving him a rare ability to tackle both proxy platform internals and user-facing product challenges. At Proxywing, Alexandre focuses on designing resilient systems, eliminating performance bottlenecks, and integrating modern AI tooling into the development process. Outside of coding, he's passionate about exploring the frontiers of AI engineering and building side projects that push his technical boundaries.

FAQ

Is web scraping with Node Unblocker legal?

Yes, web scraping with node-unblocker is generally legal as long as you comply with the target site’s terms of service and local laws.

Why does Cloudflare block Node Unblocker?

Cloudflare uses advanced algorithms that can easily detect traffic from Node Unblocker. In its default setup, Node Unblocker does not include the sophisticated fingerprinting capabilities required to bypass such systems.

Does Node Unblocker rotate IP addresses?

No, it uses one IP address by default. To enable IP rotation, you must integrate it with a dedicated proxy solution with a large IP pool.

Is Node Unblocker suitable for large-scale scraping projects?

Large-scale projects usually require advanced IP rotation, which node-unblocker does not offer. This makes it ideal only for small scraping projects targeting less protected websites.

Can I use Node Unblocker together with proxies?

Yes, you can. Dedicated residential proxies are necessary to enable capabilities like IP rotation, which are needed especially when scraping sites with advanced anti-bot systems.